Writing effective agile user stories and acceptance criteria requires treating them as a conversation tool rather than detailed specifications. A user story should be a brief statement describing a feature from the user’s perspective, while acceptance criteria define the measurable conditions that make that story complete. For example, instead of writing “build a login form,” an effective user story would be “As a new user, I want to log in with my email so that I can access my account,” paired with acceptance criteria like “User can enter email and password,” “System displays error if credentials are wrong,” and “User is redirected to dashboard on successful login.” This approach bridges the gap between business needs and technical implementation.

The most common mistake teams make is confusing user stories with task lists or creating acceptance criteria so vague they become meaningless. When stories lack clarity, developers spend time guessing requirements, and teams end up debating what “complete” actually means during code review. The difference between a throwaway user story and one that drives productive development comes down to whether it establishes a shared understanding of value and clear, testable boundaries around what the feature must accomplish.

Table of Contents

- What Makes a User Story Different from Traditional Requirements?

- Structuring User Stories for Clarity and Team Alignment

- Acceptance Criteria as the Bridge Between Business and Development

- Writing Acceptance Criteria That Prevent Scope Creep

- Common Pitfalls in User Stories and How to Avoid Them

- Using Acceptance Criteria in Testing and Quality Assurance

- Scaling User Stories Across Large Teams and Complex Domains

- Conclusion

- Frequently Asked Questions

What Makes a User Story Different from Traditional Requirements?

User stories emerged from Extreme Programming (XP) as a reaction to 50-page specification documents that nobody read and that were out of date before development finished. Traditional requirements treat software development like manufacturing: specify everything upfront, then execute. User stories assume the opposite: what you’ll learn while building the feature is at least as important as what you know today. A traditional requirement might say “The system shall support up to 1,000 concurrent users with a response time under 200ms.” A user story says “As an operations manager, I want to monitor system performance so that I can detect issues before users complain,” which opens a conversation about what “monitoring” means and which metrics matter. The practical difference is that user stories are sized to be completed within a single sprint (typically one to five days of work each), while traditional requirements often describe functionality that would take months to build.

User stories also explicitly acknowledge that you don’t have all the answers. The story title and acceptance criteria establish a contract about what will be tested, but the team works out the details in conversation during refinement and sprint planning, not in a static document. This creates feedback loops where the developer, tester, and product owner can raise questions in real time instead of discovering misalignment weeks later.

A limitation of user stories is that they can obscure complex architectural decisions. If your story is “As a content manager, I want to upload images that are automatically optimized,” acceptance criteria might specify “Image loads within 2 seconds on mobile.” But whoever writes the story might not realize that auto-optimization requires adding a background job system, a new CDN configuration, or changes to your deployment pipeline. For complex systems, you sometimes need supplementary documentation or spikes (time-boxed research stories) to explore unknowns before writing implementation stories.

Structuring User Stories for Clarity and Team Alignment

The format “As a [user role], I want [feature], so that [benefit]” isn’t a rigid rule—it’s a thinking tool. It forces you to identify who benefits, what they’re asking for, and why. In practice, teams find that enforcing this format reveals stories that are poorly thought through. If you can’t easily fill in the blanks, the story probably needs more discussion. For a payment system, you might write “As a customer, I want to save my payment methods so that I can check out faster,” which immediately tells you the story is about convenience and repeat customers, not security. That context matters because it affects how you approach the implementation and what success looks like. The challenge with user stories is that they’re prone to ambiguity without clear acceptance criteria.

Different people imagine different things when they read a story. One developer might think “save payment methods” means storing them in the database; another assumes it means integrating with a payment vault. Acceptance criteria close this gap by describing observable behaviors: “Customer can save a payment method after entering card details,” “Saved methods appear in a dropdown at checkout,” “Customers can delete saved payment methods.” Each criterion should be verifiable by a human tester or an automated test; if a criterion can’t be verified, it’s too vague.

A warning: writing overly detailed acceptance criteria defeats the purpose of agile development. If you document every edge case and validation rule in acceptance criteria, you’ve essentially written a specification that might be outdated before the sprint starts. The right balance is to document the happy path and obvious edge cases (what happens when a payment fails, what happens if the customer deletes all saved methods), but leave room for the developer to raise unusual scenarios with the team during the sprint. If you lock in too much detail, you reduce the flexibility that makes agile valuable in the first place.
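To make “verifiable by an automated test” concrete, here is a minimal Python sketch that turns the three payment-method criteria, plus two obvious edge cases, into one check each. `PaymentMethodStore` and its methods are hypothetical stand-ins for whatever your real checkout code exposes; the point is the one-to-one mapping from criterion to check, not the storage design.

```python
class PaymentMethodStore:
    """Hypothetical in-memory model of a customer's saved payment methods."""

    def __init__(self):
        self._methods = {}  # customer_id -> list of masked card labels

    def save(self, customer_id, card_label):
        self._methods.setdefault(customer_id, []).append(card_label)

    def checkout_dropdown(self, customer_id):
        # The options a customer would see in the checkout dropdown.
        return list(self._methods.get(customer_id, []))

    def delete(self, customer_id, card_label):
        # list.remove raises ValueError if the label was never saved.
        self._methods.get(customer_id, []).remove(card_label)


# One check per acceptance criterion, plus obvious edge cases.
def check_customer_can_save_method():
    store = PaymentMethodStore()
    store.save("cust-1", "Visa ending 4242")
    assert "Visa ending 4242" in store.checkout_dropdown("cust-1")


def check_saved_methods_appear_at_checkout():
    store = PaymentMethodStore()
    store.save("cust-1", "Visa ending 4242")
    store.save("cust-1", "Mastercard ending 4444")
    assert store.checkout_dropdown("cust-1") == ["Visa ending 4242", "Mastercard ending 4444"]


def check_customer_can_delete_method():
    store = PaymentMethodStore()
    store.save("cust-1", "Visa ending 4242")
    store.save("cust-1", "Mastercard ending 4444")
    store.delete("cust-1", "Visa ending 4242")
    assert store.checkout_dropdown("cust-1") == ["Mastercard ending 4444"]


def check_deleting_all_methods_leaves_empty_dropdown():
    # Edge case: the customer deletes every saved method.
    store = PaymentMethodStore()
    store.save("cust-1", "Visa ending 4242")
    store.delete("cust-1", "Visa ending 4242")
    assert store.checkout_dropdown("cust-1") == []


def check_new_customer_sees_empty_dropdown():
    # Edge case: a customer who has never saved a method.
    assert PaymentMethodStore().checkout_dropdown("cust-2") == []


for check in (
    check_customer_can_save_method,
    check_saved_methods_appear_at_checkout,
    check_customer_can_delete_method,
    check_deleting_all_methods_leaves_empty_dropdown,
    check_new_customer_sees_empty_dropdown,
):
    check()
```

Unusual scenarios (expired cards, duplicate cards) are intentionally absent; per the warning above, those are for the team to discuss during the sprint.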

Acceptance Criteria as the Bridge Between Business and Development

Acceptance criteria translate business intent into testable conditions. When a product manager says “customers need better performance,” that’s a business need but not something you can test. Acceptance criteria convert it into something measurable: “The checkout page loads in under 3 seconds on 4G networks,” “Search results appear within 2 seconds of typing,” “Images are fully rendered before interactive elements load.” This translation is where miscommunication often happens, because business stakeholders think in outcomes while developers think in implementation details. The practical value of good acceptance criteria shows up in code review. If a developer marks a story as done and the reviewer has to interpret the criteria, something is wrong. Criteria should be specific enough that two reasonable people evaluating the same code would agree on whether the story is complete.

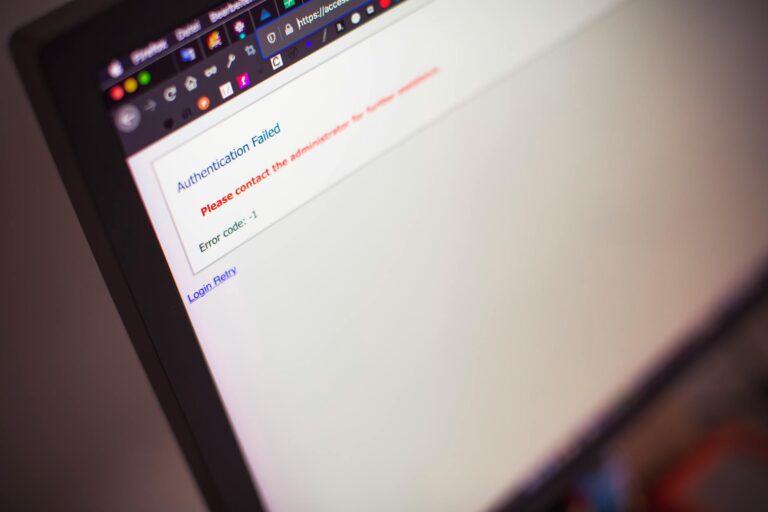

For example, “the form validates user input” is not a criterion; “the form prevents submission if the email is invalid and displays ‘Please enter a valid email address’” is. The second one tells you exactly what behavior to look for. A point teams often overlook: acceptance criteria describe what you’re testing, not how you’re testing it. Some teams write acceptance criteria like “unit tests are written,” which blurs the boundary between acceptance criteria and implementation choices. Your criteria should be about user-visible behavior and business value, not about the test framework you’re using. Let the development team choose whether to use unit tests, integration tests, or manual testing. Your criteria specify what must work; the team decides how to verify it.
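As an illustration of how precise the email criterion is, the sketch below checks it directly, including the exact error wording. `submit_signup_form` is a hypothetical handler, and the regular expression is a deliberately simplified email check, not a complete one. Consistent with the paragraph above, this is only one way to verify the criterion; the team could equally cover it with an integration test or a manual check.

```python
import re

# Deliberately simplified: non-space text, a single "@", a domain containing a dot.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")
INVALID_EMAIL_MESSAGE = "Please enter a valid email address"


def submit_signup_form(email):
    """Hypothetical form handler returning (submitted, error_message)."""
    if EMAIL_PATTERN.fullmatch(email) is None:
        return False, INVALID_EMAIL_MESSAGE  # block submission and show the exact text
    return True, None


def check_invalid_email_blocks_submission():
    submitted, error = submit_signup_form("not-an-email")
    assert submitted is False
    assert error == "Please enter a valid email address"  # wording fixed by the criterion


def check_valid_email_is_submitted():
    submitted, error = submit_signup_form("ana@example.com")
    assert submitted is True
    assert error is None


check_invalid_email_blocks_submission()
check_valid_email_is_submitted()
```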

Writing Acceptance Criteria That Prevent Scope Creep

Vague acceptance criteria are a major source of scope creep because there’s always something else you could add. If your criteria don’t make the boundaries explicit, teams tend to build the extras anyway. For a notification feature, you might write “Users receive notifications,” which opens the door to debates about email notifications, push notifications, SMS, notification preferences, notification history, and more. Better criteria are “Users receive an in-app notification immediately when someone likes their post” and “Users can dismiss notifications individually.” You’ve now specified the delivery method, the trigger, and the user control, and you’ve implicitly said that email and SMS are out of scope for this story. The tradeoff is between clarity and over-specification: writing too many criteria makes your story harder to complete and more vulnerable to changing requirements.
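Written this precisely, the two notification criteria translate almost line for line into checks. In this sketch `NotificationFeed` is hypothetical, and it deliberately models only the in-app channel, which mirrors how the criteria keep email and SMS out of scope.

```python
from itertools import count


class NotificationFeed:
    """Hypothetical in-app notification feed; no email or SMS channel exists here."""

    def __init__(self):
        self._next_id = count(1)
        self._items = []  # (id, recipient, message) tuples, oldest first

    def on_post_liked(self, author, liker):
        # Criterion 1: a like immediately creates an in-app notification for the author.
        item = (next(self._next_id), author, f"{liker} liked your post")
        self._items.append(item)
        return item[0]

    def visible_for(self, user):
        return [message for _, recipient, message in self._items if recipient == user]

    def dismiss(self, notification_id):
        # Criterion 2: dismissing removes only that one notification.
        self._items = [item for item in self._items if item[0] != notification_id]


feed = NotificationFeed()
first = feed.on_post_liked("maya", "li")
feed.on_post_liked("maya", "sam")
assert feed.visible_for("maya") == ["li liked your post", "sam liked your post"]
feed.dismiss(first)
assert feed.visible_for("maya") == ["sam liked your post"]
```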

A good heuristic is that if you have more than five acceptance criteria, your story is probably too big. Consider splitting it. “As a user, I want to manage my notification settings” is actually five stories: muting notifications, setting frequency, choosing delivery methods, viewing notification history, and setting up filters. Each could be its own story with two or three focused criteria. When you’re writing criteria, ask yourself: “Would we ship this without this criterion?” If the answer is yes, drop it or move it to a future story. This keeps each story focused on delivering one piece of value rather than trying to solve everything at once. Many teams find that stories are too ambitious early in a project and become appropriately sized as the team learns its own velocity.
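If you want the five-criteria heuristic applied consistently during backlog refinement, a tiny check can flag candidates for splitting. The `Story` type, the threshold constant, and the sample criteria are illustrative assumptions; a flagged story is a prompt for discussion, not an automatic split.

```python
from dataclasses import dataclass, field

MAX_CRITERIA = 5  # rule-of-thumb threshold from the text; tune it for your team


@dataclass
class Story:
    title: str
    criteria: list[str] = field(default_factory=list)


def stories_to_split(backlog):
    """Return titles of stories whose criteria count exceeds the heuristic."""
    return [story.title for story in backlog if len(story.criteria) > MAX_CRITERIA]


backlog = [
    # Six illustrative criteria: over the threshold, so it gets flagged.
    Story("Manage notification settings", [
        "Mute notifications", "Set frequency", "Choose delivery methods",
        "View notification history", "Set up filters", "Reset to defaults",
    ]),
    Story("Mute notifications", ["User can mute a thread", "Muted threads stay muted after logout"]),
]
print(stories_to_split(backlog))  # ['Manage notification settings']
```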

Common Pitfalls in User Stories and How to Avoid Them

The biggest pitfall is treating user stories as individual units of work that developers own in isolation. Stories are supposed to generate conversations. When a developer picks up a story and implements it without talking to the product owner or tester, you lose the benefits of the agile approach. A developer might build something technically correct but miss a key use case because they didn’t ask questions. Make space in your process for developers to clarify stories with product owners during the sprint, not just during planning.

Another pitfall is writing stories that focus on implementation rather than user value. “As a developer, I want to refactor the authentication service” is a task, not a user story. User stories describe value to the end user. Technical debt and refactoring are necessary, but they should either be represented as technical stories with clear outcomes (like “improve login speed to under 500ms”) or handled in a dedicated refactoring sprint, not mixed into feature stories where they’re invisible to stakeholders. A subtler pitfall is accepting stories that don’t map to a real user. Legitimate users in your system include customers, admins, content managers, and support staff, but if a story is written for “the system,” rewrite it from a specific user’s perspective. Finally, watch for stories that silently depend on other features. “As a user, I want to see my profile” is fine, but if it assumes users are already logged in and have profiles created, you might be missing upstream stories that establish those capabilities.

Using Acceptance Criteria in Testing and Quality Assurance

Acceptance criteria become your test plan. A QA engineer reading acceptance criteria for a checkout flow should be able to immediately write test cases without needing additional specifications. If a criterion says “users can apply a coupon code to reduce the total,” a tester knows to verify that entering a valid code reduces the price, entering an invalid code shows an error, expired codes are rejected, and the discount is applied to the correct items. Each criterion spawns multiple test cases.
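To show how one criterion fans out into several test cases, here is a sketch of the coupon example. The coupon table, `apply_coupon`, `CouponError`, and the per-category eligibility rule are all hypothetical; prices are integer cents to avoid floating-point rounding.

```python
from datetime import date

# Hypothetical coupon table: code -> (percent off, eligible category, expiry date).
COUPONS = {
    "SAVE10": (10, "books", date(2030, 1, 1)),
    "OLD5": (5, "books", date(2020, 1, 1)),
}


class CouponError(ValueError):
    """Raised for coupon codes that must be rejected."""


def apply_coupon(cart, code, today):
    """Return the cart total in cents after applying `code`.

    `cart` is a list of (category, price_in_cents) pairs.
    """
    if code not in COUPONS:
        raise CouponError("Invalid coupon code")
    percent, category, expires = COUPONS[code]
    if today > expires:
        raise CouponError("Coupon has expired")
    total = 0
    for item_category, price in cart:
        if item_category == category:
            price -= price * percent // 100  # discount only eligible items
        total += price
    return total


# Test cases spawned by the single criterion "users can apply a coupon code to reduce the total".
def check_coupon_criterion():
    cart = [("books", 2000), ("games", 5000)]
    today = date(2025, 6, 1)
    # Valid code: 200 cents off the books item only, none off games.
    assert apply_coupon(cart, "SAVE10", today) == 6800
    for bad_code in ("NOPE", "OLD5"):  # an unknown code, then an expired one
        try:
            apply_coupon(cart, bad_code, today)
        except CouponError:
            continue
        raise AssertionError(f"{bad_code} should have been rejected")


check_coupon_criterion()
```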

The benefit of this connection is that it makes testing more systematic. Instead of QA having to invent test scenarios from thin air, they’re testing against specific, pre-agreed conditions. This also makes it easier to track test coverage. If you have ten acceptance criteria and you’ve tested nine of them, you know exactly what’s missing. For development teams with tight timelines, this clarity is invaluable—it prevents the situation where you ship code, discover something untested, and have to rush a fix.
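The “nine of ten” bookkeeping is simple enough to sketch. This assumes each criterion has an ID and that you can list which tests verify it; the IDs and test names below are hypothetical, and you might keep this traceability in your tracker or test-management tool instead of in code.

```python
# Hypothetical mapping from acceptance criterion ID to the tests that verify it.
criteria_to_tests = {f"AC-{n}": [f"test_ac_{n}"] for n in range(1, 10)}
criteria_to_tests["AC-10"] = []  # a criterion nobody has tested yet


def coverage_report(mapping):
    """Return (covered_count, total_count, uncovered_ids) for one story."""
    uncovered = [ac for ac, tests in mapping.items() if not tests]
    return len(mapping) - len(uncovered), len(mapping), uncovered


covered, total, missing = coverage_report(criteria_to_tests)
print(f"{covered}/{total} criteria tested; missing: {missing}")
# prints "9/10 criteria tested; missing: ['AC-10']"
```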

Scaling User Stories Across Large Teams and Complex Domains

As projects grow, teams often struggle with how detailed to make user stories. Large organizations sometimes pile on so many criteria and supplementary documentation that stories become waterfall-style specifications with “agile” framing. The key is distinguishing between acceptance criteria (what must be true for the story to be done) and supporting documentation (architecture decisions, API contracts, performance requirements). Acceptance criteria live in your tracking system; supporting docs belong in a shared wiki or architecture decision log where they’re easier to update and reference across multiple stories.

For complex domains like fintech or healthcare, regulations sometimes force you to document more than you’d otherwise want. A story about a payment can’t sidestep compliance requirements with vague criteria. In these cases, write acceptance criteria that reference the applicable regulations: “Transactions are logged according to SOX compliance requirements” with a link to the policy document, rather than trying to spell out every detail. This keeps criteria readable while ensuring compliance is in scope.

Conclusion

Writing effective user stories and acceptance criteria is a skill that teams develop over time. The core insight is that stories are communication tools, not specification documents. They work best when they establish enough clarity that a developer, tester, and product owner can work toward the same definition of done, but leave room for the team to solve problems together rather than debating pre-written requirements.

Start with the format (As a, I want, so that), ensure each story has focused acceptance criteria, and limit criteria to things that directly affect user value or testing. This approach tends to ship features faster, reduce rework, and make your team more responsive to changing requirements. The next step is to calibrate story size to your team’s actual velocity and iterate on how you write criteria based on what causes rework or confusion in your sprints. After two or three cycles, you’ll likely find a natural rhythm for how much detail you need, and your stories will become a more reliable bridge between business needs and technical implementation.

Frequently Asked Questions

How many acceptance criteria should a user story have?

Typically two to five. If you have more than five, your story is probably too large. Each criterion should be testable and independent enough that the story could theoretically be split if needed. More isn’t better; clarity is what matters.

What’s the difference between acceptance criteria and a task list?

Acceptance criteria describe observable user behavior and outcomes. A task list describes how the team will accomplish those outcomes. Don’t put “write unit tests” in acceptance criteria—put “users can filter results by category” and let the team decide how to test that behavior.

Should we write acceptance criteria before or after sprint planning?

Before sprint planning, write rough criteria during backlog refinement so the team understands scope. After sprint planning, you might refine or add details as the team asks questions. Criteria evolve as your understanding improves.

Can acceptance criteria change during a sprint?

Changes should be rare and justified. If requirements change mid-sprint, you’ve either discovered something important (in which case you might pull the story and re-plan) or someone didn’t communicate clearly before the sprint started. Either way, it’s a signal to improve your planning process.

How do we handle stories that span multiple technical layers?

Write one story from the user’s perspective, but let the team break it into technical tasks or subtasks if needed. A story like “As a customer, I want to search for products” might involve database queries, API endpoints, and UI components, but it’s still one user story with one set of acceptance criteria.

What if a story depends on another story?

Make that dependency explicit in your backlog management tool. Don’t hide dependencies in vague criteria. If story B can’t start until story A is done, the product owner and team should know and plan accordingly.
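If your backlog tool does not order dependent stories for you, a short script can. This sketch uses Python’s standard-library `graphlib` (Python 3.9+); the backlog is hypothetical and reuses the profile example from the pitfalls section, mapping each story to the stories it depends on.

```python
from graphlib import TopologicalSorter

# Hypothetical backlog: each story maps to the set of stories it depends on.
dependencies = {
    "View profile": {"Log in", "Create profile"},
    "Create profile": {"Log in"},
    "Log in": set(),
}

# static_order() yields each story only after all of its prerequisites,
# and raises graphlib.CycleError if the dependencies are circular.
order = list(TopologicalSorter(dependencies).static_order())
print(order)  # ['Log in', 'Create profile', 'View profile']
```

A cycle error here is itself useful information: it means two stories each claim to need the other, which is a planning conversation to have before the sprint starts.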