JIRA reports improve team performance by providing real-time visibility into work completion rates, bottlenecks, and capacity constraints. Rather than relying on gut feelings or status meetings, teams using JIRA reports make data-driven decisions about workload management and process optimization. A software development team, for example, can run a Velocity Report at the end of each sprint to track how many story points they completed over the past five sprints, then use that average to forecast how much work they can realistically commit to in future sprints.

This shifts team planning from guesswork to evidence-based forecasting. The core insight is simple: the reports JIRA provides—Velocity Reports, Burndown Charts, and Cycle Time tracking—expose patterns that would otherwise remain invisible. Teams that actively monitor these metrics catch slowdowns early, adjust workflows before deadlines slip, and improve their ability to deliver consistent results over time.

Table of Contents

- What JIRA Reports Show About Your Team’s Work

- Understanding Burndown Charts and Real-Time Sprint Visibility

- Cycle Time and Identifying Workflow Bottlenecks

- Work in Progress Limits as a Performance Lever

- Manual Ticket Hygiene and the Limits of Automation

- Velocity Reports for Capacity Planning and Forecasting

- The Evolution of JIRA Reporting and Future Readiness

- Conclusion

What JIRA Reports Show About Your Team’s Work

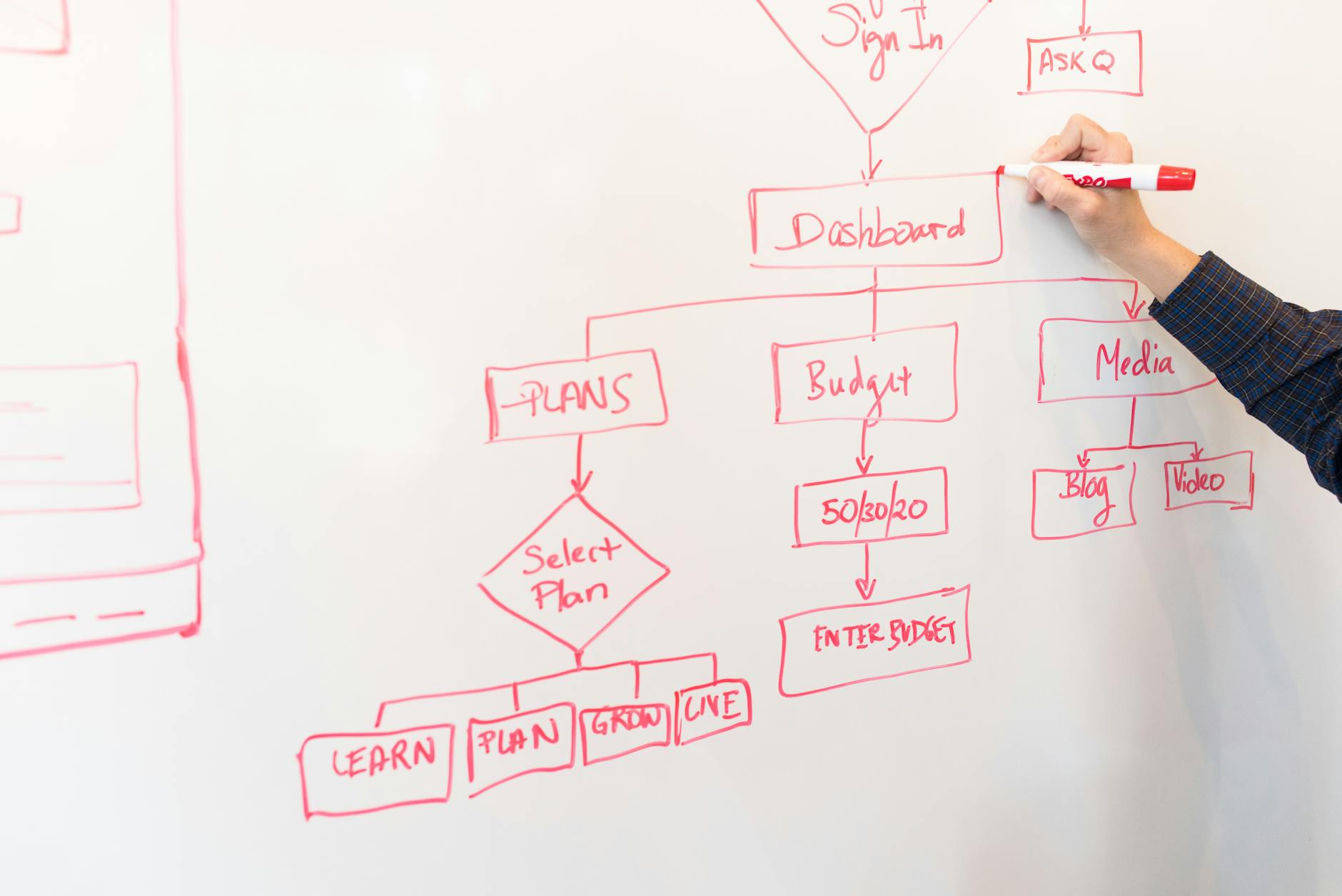

Velocity Reports form the foundation of sprint-based planning. A Velocity Report calculates the average number of story points (or hours, or whatever unit your team estimates in) that your team completes across multiple sprints. If your team completed 45 points in sprint one, 50 points in sprint two, and 48 points in sprint three, your three-sprint average is approximately 47.67 points. This becomes your baseline for capacity planning. Instead of optimistically loading 100 points into the next sprint and watching the team miss its goal, a product manager can commit to 48 points and expect the team to hit the target.

Burndown Charts display the remaining work in a sprint as the sprint progresses. The y-axis shows story points remaining; the x-axis shows days elapsed. The ideal burndown line slopes downward at a steady rate, reaching zero by sprint end. Real burndowns often look different: they might plateau for three days while a team unblocks a critical dependency, then drop sharply once the blocker clears. That visual pattern tells you something a status update never could: you can see exactly when the team hit friction and when they recovered.

Cycle Time tracking measures how long individual tasks take from the moment someone creates them until they’re marked complete. If your average cycle time for a bug fix is two days but one specific bug took fifteen days, Cycle Time reports help you investigate why. Was the bug complex? Did it get deprioritized repeatedly? Did someone lack the context to solve it quickly? These questions lead to real improvements, not just complaints.
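The velocity arithmetic described earlier in this section can be reproduced outside JIRA in a few lines. The following is a minimal sketch, not JIRA's own calculation: the function name `average_velocity` and the `window` parameter are illustrative assumptions, and the input is simply a list of completed points per sprint, oldest first.

```python
from statistics import mean


def average_velocity(completed_points, window=None):
    """Average completed points over the most recent `window` sprints.

    `completed_points` is a list ordered oldest to newest.
    If `window` is None, every sprint in the list is used.
    """
    if not completed_points:
        raise ValueError("need at least one completed sprint")
    if window is not None and window <= 0:
        raise ValueError("window must be a positive number of sprints")
    recent = completed_points if window is None else completed_points[-window:]
    return mean(recent)


# The three sprints from the example above.
sprint_points = [45, 50, 48]
baseline = average_velocity(sprint_points)
print(round(baseline, 2))  # 47.67
```

Passing `window=3` on a longer history keeps the baseline focused on recent sprints, which can matter if the team's size or estimation habits have changed.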

Understanding Burndown Charts and Real-Time Sprint Visibility

Burndown Charts serve a practical function: they let teams respond to trends while a sprint is still in progress. If the burndown line is falling behind the ideal slope halfway through the sprint, the team can pull together in a standup and ask whether scope should be cut, whether someone needs help, or whether the original commitment was too optimistic. Without that real-time data, you discover the problem on the last day of the sprint, when it’s too late to adjust.

However, Burndown Charts depend heavily on teams maintaining accurate ticket data. If tasks aren’t created consistently, aren’t moved through statuses, or contain inflated estimates, the burndown line becomes meaningless: it might show progress when none has occurred. A team where some people log work immediately and others update tickets weekly will have a chaotic, unreliable burndown that misleads more than it informs. The report is only as good as the discipline behind it.

Burndown Charts also struggle with mid-sprint scope changes. If a client requests a critical feature during a sprint and the team adds thirty points of work, the burndown becomes harder to interpret. Did the team slow down, or did the sprint just get bigger? JIRA records the change, but the visual narrative becomes confusing for stakeholders who aren’t paying close attention.
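To make "falling behind the ideal slope" concrete, here is a small sketch that compares actual remaining work against a straight-line ideal burndown. It assumes a fixed sprint scope (no mid-sprint additions) and a hypothetical list of end-of-day remaining points; the function names are illustrative and do not come from JIRA.

```python
def ideal_remaining(total_points, sprint_days, day):
    """Points left after `day` days on a straight-line burndown."""
    return total_points * (sprint_days - day) / sprint_days


def days_behind_ideal(total_points, sprint_days, actual_remaining):
    """Return the days on which actual remaining work sits above the ideal line.

    `actual_remaining[0]` is the remaining work at the end of day 1,
    `actual_remaining[1]` at the end of day 2, and so on.
    """
    behind = []
    for day, remaining in enumerate(actual_remaining, start=1):
        if remaining > ideal_remaining(total_points, sprint_days, day):
            behind.append(day)
    return behind


# Hypothetical 10-day sprint with 50 points and a plateau on days 3 to 5.
actual = [45, 40, 40, 40, 40, 22, 18, 12, 5, 0]
print(days_behind_ideal(50, 10, actual))  # [3, 4, 5, 6, 7, 8]
```

A check like this only says when the team was behind, not why. A scope increase would need the total to be adjusted from the day it was added, which this sketch deliberately leaves out.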

Cycle Time and Identifying Workflow Bottlenecks

Cycle Time tracking reveals where tasks linger. If development on most features takes 5 days but the tickets then sit in code review for another 4 days, your Cycle Time report shows that code review is a bottleneck. The data prompts a conversation: Do you need more reviewers? Should you reduce the scope of reviews? Can junior developers pair with seniors to accelerate the learning curve?

Consider a real scenario: A QA team notices through Cycle Time reports that bugs in one particular subsystem take twice as long to fix as bugs elsewhere. Investigation reveals that the subsystem has poor test coverage, so engineers spend extra time manually verifying their fixes. This insight leads to an initiative to improve test coverage, which pays dividends for months afterward. Without Cycle Time data, that subsystem might have remained a perpetual drag on the team.

A limitation of Cycle Time tracking is that it measures elapsed time, not effort. A task that sits unstarted for three days counts the same as three days of active work. This makes Cycle Time useful for identifying delays and blockers, but less useful for assessing individual productivity or team workload. You need additional context to distinguish “the task was hard” from “the task was waiting for a decision.”
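One way to separate waiting from working is to break a ticket's elapsed time down by status. The sketch below assumes you have already exported each ticket's status transitions as `(timestamp, status)` pairs; that data shape, the status names, and the function name `time_in_status` are assumptions for illustration, not a JIRA API.

```python
from collections import defaultdict
from datetime import datetime


def time_in_status(transitions, closed_at):
    """Sum the days a ticket spent in each status.

    `transitions` is a time-ordered list of (entered_at, status) tuples.
    The last status is considered to end at `closed_at`.
    """
    totals = defaultdict(float)
    boundaries = transitions[1:] + [(closed_at, None)]
    for (start, status), (end, _) in zip(transitions, boundaries):
        totals[status] += (end - start).total_seconds() / 86400
    return dict(totals)


# Hypothetical ticket: 3 days unstarted, 2 days of work, 4 days in review.
history = [
    (datetime(2024, 3, 1, 9), "To Do"),
    (datetime(2024, 3, 4, 9), "In Progress"),
    (datetime(2024, 3, 6, 9), "In Review"),
]
breakdown = time_in_status(history, closed_at=datetime(2024, 3, 10, 9))
print(breakdown)  # {'To Do': 3.0, 'In Progress': 2.0, 'In Review': 4.0}
```

Here the nine-day total hides the fact that only two days were active development, which is exactly the distinction a single cycle time number cannot show.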

Work in Progress Limits as a Performance Lever

Work in Progress (WIP) limits restrict how many tasks a team member or team can actively work on at once. If you set a WIP limit of three, a developer can’t start a fourth task until one of the first three is complete. This sounds restrictive, but it tends to improve focus and throughput. Task-switching costs time: when you interrupt development to jump to a different task, your brain needs time to reorient. WIP limits force serial completion over parallel chaos.

JIRA reports help you find the right WIP limit for your context. If you set a limit of two and your team members spend most of their day waiting for dependencies, you’ve set the limit too low. If you set a limit of ten and your Cycle Time reports show that an average task now takes twice as long as it used to, you’ve set it too high. By testing different limits and observing the impact on Cycle Time and completion rates, you discover the sweet spot for your team and workflow.

The tradeoff is that WIP limits can feel constraining initially. Developers accustomed to working on many projects simultaneously may perceive them as micromanagement. Teams that adopt WIP limits typically report better results after a few weeks of adjustment, once the context-switching penalty fades. But the adjustment period is real and requires buy-in from the team.
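The WIP rule itself is simple enough to express as a check. This is a minimal sketch assuming tickets are plain dictionaries with hypothetical `assignee` and `status` fields and that "In Progress" is the only active status; it illustrates the rule and is not how JIRA boards enforce column limits.

```python
def active_count(tasks, assignee, active_statuses=("In Progress",)):
    """Count the tasks an assignee is actively working on."""
    return sum(
        1
        for task in tasks
        if task["assignee"] == assignee and task["status"] in active_statuses
    )


def can_start_new_task(tasks, assignee, wip_limit):
    """True if the assignee is below the WIP limit and may pull another task."""
    return active_count(tasks, assignee) < wip_limit


# Hypothetical board state.
tasks = [
    {"key": "DEV-1", "assignee": "ana", "status": "In Progress"},
    {"key": "DEV-2", "assignee": "ana", "status": "In Progress"},
    {"key": "DEV-3", "assignee": "ana", "status": "In Progress"},
    {"key": "DEV-4", "assignee": "ana", "status": "Done"},
    {"key": "DEV-5", "assignee": "ben", "status": "In Progress"},
]
print(can_start_new_task(tasks, "ana", wip_limit=3))  # False
print(can_start_new_task(tasks, "ben", wip_limit=3))  # True
```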

Manual Ticket Hygiene and the Limits of Automation

JIRA reporting depends almost entirely on how well your team maintains ticket data. If estimates are missing, status updates are sporadic, or tickets aren’t properly closed, your Velocity Reports and Cycle Time tracking become noise. A team member who creates a ticket but never updates the status when work starts will artificially inflate Cycle Time. Someone who estimates a task at five points but completes it in one day will throw off your Velocity average.

The limitation is significant: JIRA lacks built-in metric decomposition and offers no automated way to enforce ticket quality. You can set up field requirements (for example, requiring an estimate before a ticket moves to “In Progress”), but you can’t easily audit whether those fields are accurate or whether the underlying process is sound. A team might appear to have healthy velocity while actually just getting better at optimistic estimation. The platform requires ongoing manual oversight to stay reliable.

Some teams address this by appointing a “Scrum Master” or “Engineering Manager” who reviews tickets weekly, looking for missing information or inconsistencies. Others run periodic audits where the team revisits completed tickets and recalibrates estimates based on actual time spent. These practices add overhead but protect the integrity of your reporting.
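Part of a weekly ticket review can be scripted against an export of your tickets. The sketch below flags missing estimates and open tickets that have not been updated recently. The field names (`key`, `estimate`, `status`, `updated`), the "Done" status, and the seven-day threshold are all assumptions chosen for illustration; it cannot judge whether an estimate is accurate, only whether it exists.

```python
from datetime import date


def audit_tickets(tickets, today, stale_after_days=7):
    """Return {ticket key: [problems]} for tickets that need attention."""
    problems = {}
    for ticket in tickets:
        issues = []
        if ticket.get("estimate") is None:
            issues.append("missing estimate")
        age_days = (today - ticket["updated"]).days
        if ticket["status"] != "Done" and age_days > stale_after_days:
            issues.append("stale status")
        if issues:
            problems[ticket["key"]] = issues
    return problems


# Hypothetical export of three tickets.
tickets = [
    {"key": "OPS-1", "estimate": 5, "status": "In Progress", "updated": date(2024, 5, 1)},
    {"key": "OPS-2", "estimate": None, "status": "To Do", "updated": date(2024, 5, 18)},
    {"key": "OPS-3", "estimate": 3, "status": "Done", "updated": date(2024, 4, 1)},
]
report = audit_tickets(tickets, today=date(2024, 5, 20))
print(report)  # {'OPS-1': ['stale status'], 'OPS-2': ['missing estimate']}
```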

Velocity Reports for Capacity Planning and Forecasting

Velocity Reports transform planning from a guessing game into math. Once you have three to five sprints of historical data, you can forecast with confidence. If your last five sprints averaged 52 story points completed, and your product roadmap needs 200 points of work, you can predict that roadmap will take approximately 3.8 sprints, or about four weeks (assuming one-week sprints). This lets product managers set realistic delivery dates and stakeholders understand when features will arrive.

Real-world example: A platform team running two-week sprints tracked a Velocity average of 38 points over six sprints. When the leadership team asked when the payment system redesign (estimated at 120 points) could ship, the team confidently said “approximately three sprints, so six weeks.” They hit that timeline because their forecast was grounded in actual data, not optimism. Without Velocity Reports, they might have guessed “maybe four weeks” and disappointed everyone when it took six.
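The forecasting arithmetic in this section is a single division, sketched here with the numbers from both examples. The function name `forecast` and its parameters are illustrative assumptions. The result is left unrounded so you can decide how much buffer to add.

```python
def forecast(backlog_points, average_velocity, sprint_length_weeks):
    """Estimate how many sprints and weeks a backlog will take.

    Returns (sprints, weeks) as unrounded floats.
    """
    if average_velocity <= 0:
        raise ValueError("average velocity must be positive")
    sprints = backlog_points / average_velocity
    return sprints, sprints * sprint_length_weeks


# 200-point roadmap at 52 points per one-week sprint.
sprints, weeks = forecast(200, 52, sprint_length_weeks=1)
print(round(sprints, 1), round(weeks, 1))  # 3.8 3.8

# 120-point redesign at 38 points per two-week sprint.
sprints, weeks = forecast(120, 38, sprint_length_weeks=2)
print(round(sprints, 1), round(weeks, 1))  # 3.2 6.3
```

Because work usually ships at sprint boundaries, a fractional result such as 3.2 sprints is worth reading as "three sprints with little slack" rather than as an exact date.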

The Evolution of JIRA Reporting and Future Readiness

JIRA reporting capabilities continue to expand. Atlassian has added more visualization options, custom reporting, and integration with other tools. Teams now use JIRA reports not just for sprint planning but for roadmap forecasting, resource allocation, and even hiring decisions (if Velocity trends show you’re consistently overcommitted, it might be time to hire). The trend is toward making data more accessible to non-technical stakeholders, so product managers and executives can understand engineering capacity without interpreting charts.

Teams that build reporting discipline early gain a compound advantage. Three years of velocity history becomes a powerful forecasting tool. Cycle Time trends reveal whether your engineering practices are actually improving or just moving problems around. The teams that use JIRA reports strategically transform them from administrative overhead into a core part of how they operate.

Conclusion

JIRA reports improve team performance by replacing intuition with evidence. Velocity Reports reveal capacity, Burndown Charts show sprint progress in real time, and Cycle Time tracking exposes bottlenecks. Teams that act on these insights by adjusting WIP limits, improving ticket discipline, and forecasting realistically consistently deliver better results than teams that treat JIRA as merely a ticketing system.

Start by ensuring your team consistently fills in estimates and updates ticket statuses. Run a Velocity Report over the last few sprints and compare the trend to your forecasts. Look at your most recent Cycle Time data and ask whether any tasks spent surprising amounts of time in a particular status. Those insights will guide your next improvements. The goal isn’t perfect reporting; it’s using the data you already generate to become slightly smarter about how you work.